Any OpenAI-Compatible Endpoint

Works with Ollama, LM Studio, vLLM, Together AI, and any other endpoint that speaks the OpenAI API format. Perfect for on-premise deployments where data stays on your infrastructure.

Not locked into one provider. Use Claude, Gemini, or run Ollama on-premise. Connect any OpenAI-compatible endpoint. Your models, your rules.

Full control over which AI model powers each employee.

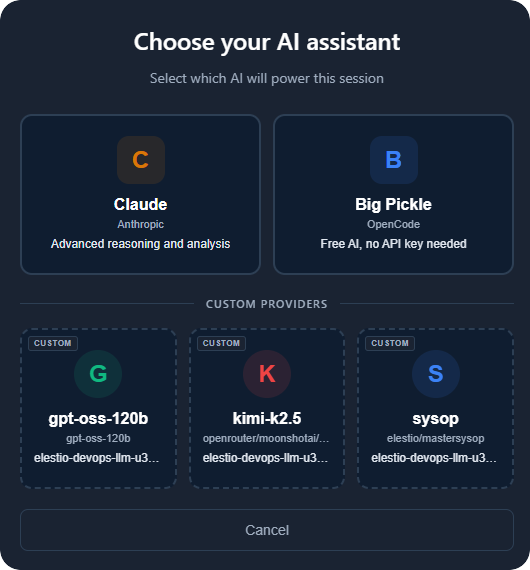

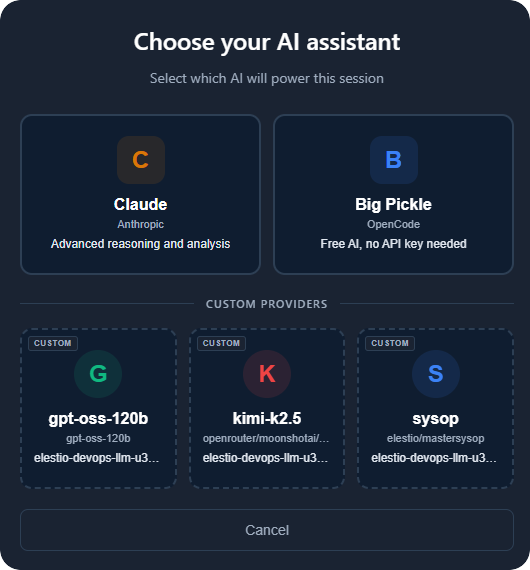

When starting a new session, pick from Claude (Anthropic), Google Gemini, or any custom provider you have added, including Ollama for on-premise deployments.

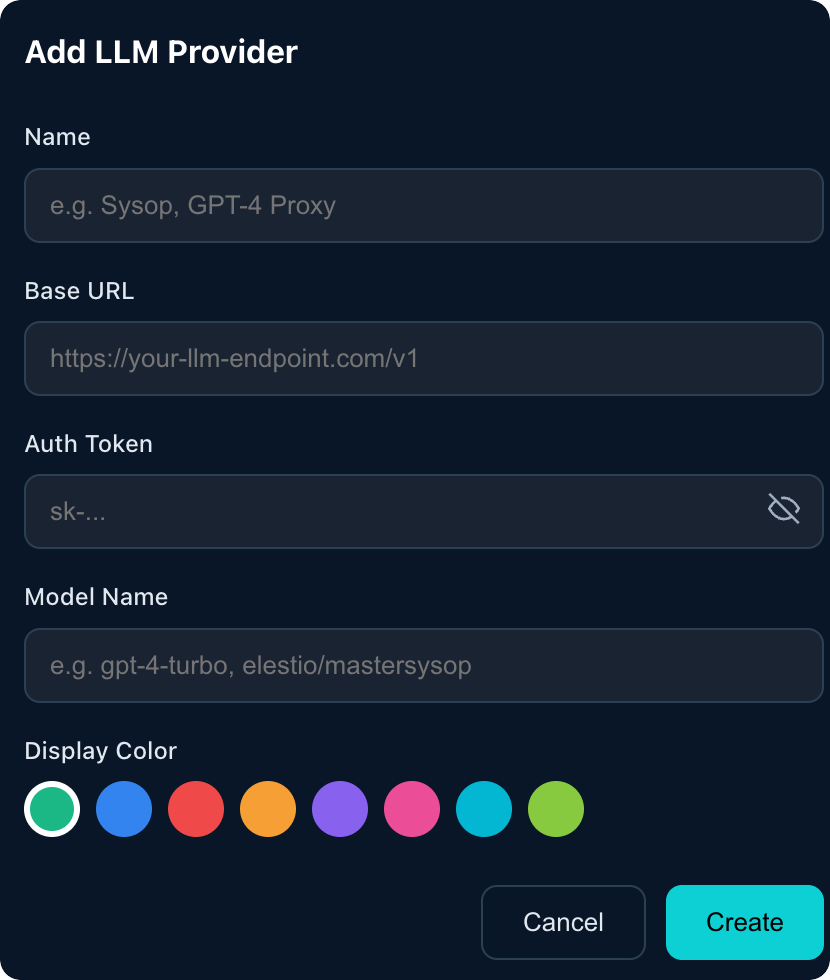

Add unlimited custom LLM providers. Configure Name, Base URL, Auth Token, Model Name, and Display Color. All API keys are encrypted.
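To make those five fields concrete, here is a minimal sketch of what a provider entry could look like and how it maps onto a request in the OpenAI chat format. The `ProviderConfig` class, the `build_chat_request` helper, and every sample value are hypothetical illustrations, not the product's actual schema or code. The only conventions assumed from the OpenAI API format are the `/chat/completions` path and the `Authorization: Bearer` header.

```python
import json
import urllib.request
from dataclasses import dataclass


@dataclass
class ProviderConfig:
    """Hypothetical mirror of the five fields named above."""
    name: str
    base_url: str
    auth_token: str
    model_name: str
    display_color: str


def build_chat_request(provider: ProviderConfig, user_message: str) -> urllib.request.Request:
    """Build (but do not send) a chat request for an OpenAI-compatible endpoint."""
    url = provider.base_url.rstrip("/") + "/chat/completions"
    payload = {
        "model": provider.model_name,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {provider.auth_token}",
        },
        method="POST",
    )


# Sample values only. Ollama serves its OpenAI-compatible API under /v1,
# on port 11434 by default; the model name and color are placeholders.
ollama = ProviderConfig(
    name="Ollama (on-premise)",
    base_url="http://localhost:11434/v1",
    auth_token="ollama",  # placeholder token for a local server
    model_name="llama3",
    display_color="#4F46E5",
)

request = build_chat_request(ollama, "Hello")
print(request.full_url)
```

Pointing the same helper at LM Studio, vLLM, or Together AI would only mean changing the Base URL, Auth Token, and Model Name values; the request shape stays the same.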

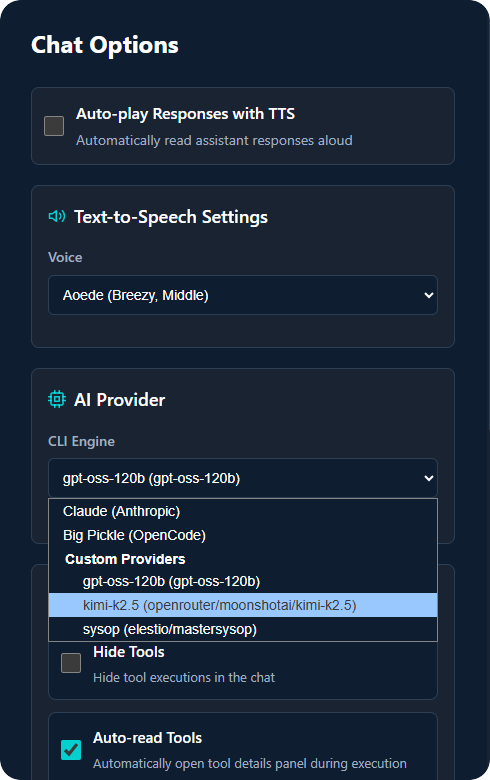

Switch between providers mid-conversation from Chat Options. Different employees can use different models based on their needs.

Full flexibility, zero lock-in.

API keys are stored encrypted, and only admins can manage providers. Your credentials are safe.

Assign different models to different employees: for example, GPT for writing and Claude for analysis.

Switch providers anytime. No data loss, no migration. Conversations persist across changes.
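One way to see why a switch needs no migration: in the OpenAI chat format, the conversation is just a list of role/content messages sent along with each request, so the same history can be handed to whichever provider is active. The sketch below is an illustration under that assumption. The `Conversation` class and its method names are hypothetical, not the product's internals.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Conversation:
    """Hypothetical conversation record: an active provider plus message history."""
    provider: str
    messages: List[Dict[str, str]] = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        self.messages.append({"role": role, "content": content})

    def switch_provider(self, new_provider: str) -> None:
        # Only the routing target changes; the history is left untouched.
        self.provider = new_provider

    def next_payload(self, model_name: str) -> dict:
        # The full history travels with every request, whichever provider receives it.
        return {"model": model_name, "messages": list(self.messages)}


chat = Conversation(provider="Claude")
chat.add("user", "Outline a product announcement.")
chat.add("assistant", "Here is a three-part outline.")
chat.switch_provider("Ollama")  # switch mid-conversation
chat.add("user", "Expand the first part.")

payload = chat.next_payload("llama3")
print(chat.provider, len(payload["messages"]))  # prints: Ollama 3
```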

Real reasons to use custom LLM providers.

Use affordable models for simple tasks, premium ones for complex reasoning. Some providers cost a fraction of others. Route intelligently to optimize costs.
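As a rough illustration of cost-aware routing, the sketch below picks the cheapest model that is capable enough for a task. Everything in it is an assumption made for the example: the `ModelOption` fields, the three capability tiers, the model names, and the prices are invented and do not describe any real provider or the product's own routing.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_million_tokens: float  # invented figure, for illustration only
    capability: int  # 1 = simple tasks, 2 = standard, 3 = complex reasoning


def pick_model(options: List[ModelOption], required_capability: int) -> ModelOption:
    """Return the cheapest option whose capability meets the requirement."""
    eligible = [m for m in options if m.capability >= required_capability]
    if not eligible:
        raise ValueError("no model meets the required capability")
    return min(eligible, key=lambda m: m.cost_per_million_tokens)


OPTIONS = [
    ModelOption("local-small", 0.0, 1),
    ModelOption("hosted-mid", 1.0, 2),
    ModelOption("hosted-premium", 10.0, 3),
]

print(pick_model(OPTIONS, 1).name)  # prints: local-small
print(pick_model(OPTIONS, 3).name)  # prints: hosted-premium
```

A simple task lands on the cheapest model, while a task that needs complex reasoning is routed to the premium one even though it costs more.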

Connect to self-hosted models. Your data never leaves your infrastructure. Perfect for regulated industries.

Test different models on the same tasks to find the best fit. Switch mid-conversation. No commitment.

Use domain-specific fine-tuned models. Medical, legal, or financial: plug in the right model for the job.

Use any model, any provider, any endpoint. Zero vendor lock-in.